Integers and Sequences Solution

This is the promised solution to the puzzle Integers and Sequences that I posted earlier. The puzzle is attached below.

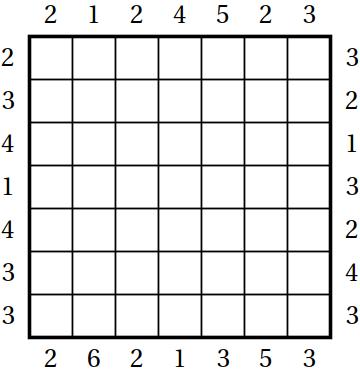

Today I do not want to discuss the underlying math; I just want to discuss the puzzle structure. I’ll assume that you solved all the individual clues and got the following lists of numbers.

- 12 42 18 40 30 24 20

- 2 1 132 42 429 14

- 7 9 1 8 5 3 10 4

- 92 117 70 145 35 1 22 12 5

- 137 1 37 13 107 1013 113

- 30 12 2 42 6

- 70 4030 836 7192

Since the title mentions sequences, it is a good idea to plug the numbers into the On-Line Encyclopedia of Integer Sequences (OEIS). Here is what you will get:

- not clear

- Catalan numbers with 5 missing: 1, 1, 2, 5, 14, 42, 132, 429

- not clear

- Pentagonal numbers with 51 missing: 1, 5, 12, 22, 35, 51, 70, 92, 117, 145

- Primeval numbers with 2 missing: 1, 2, 13, 37, 107, 113, 137, 1013

- not clear

- Weird numbers with 5830 missing: 70, 836, 4030, 5830, 7192

Your first “aha moment” happens when you notice that the sequences are in alphabetical order and each has exactly one number missing. The alphabetical order is a good sign that you are on the right track; it can also narrow down the possible names of the sequences that you haven’t yet identified. Alphabetical order also means that the groups are not yet in answer order, so you will have to figure out the correct order for producing the answer.

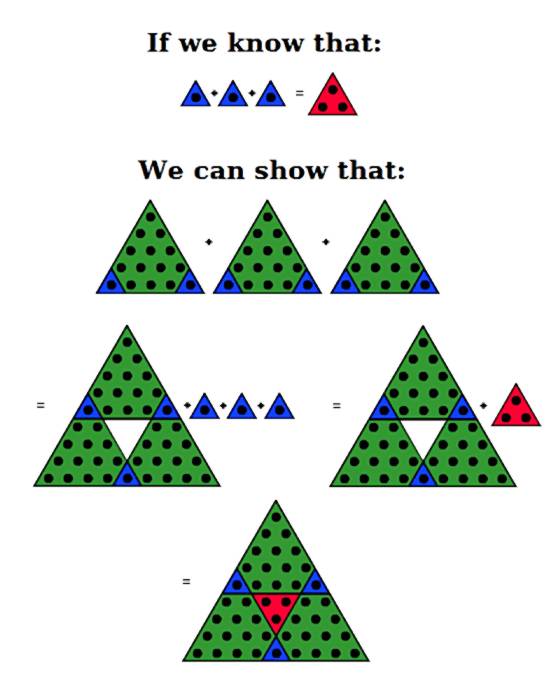

Did you notice that some groups above are as long as nine integers and some are as short as four? In puzzles, nothing is random, so the lengths of the groups should mean something. Your second “aha moment” will come when you realize that, together with the missing number, the number of integers in each group is the same as the number of letters in the name of the sequence. This means you can get a letter by using the position of the missing number within its sequence as an index into the name of the sequence.

So each group of numbers provides a letter. Now we need to identify the remaining sequences and figure out in which order the groups will produce the word that is the answer.
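The indexing step can be sketched in a few lines of Python for the four sequences identified so far. This is only an illustration under stated assumptions: the names `SEQUENCES`, `GROUPS`, and `extract_letter` are made up here, and the Catalan terms are assumed to be listed without the repeated leading 1, so that the list length matches the length of the name.

```python
# Terms of each identified sequence in increasing order.
# Assumption: Catalan is listed with a single leading 1 so that
# the number of terms equals the number of letters in "catalan".
SEQUENCES = {
    "catalan": [1, 2, 5, 14, 42, 132, 429],
    "pentagonal": [1, 5, 12, 22, 35, 51, 70, 92, 117, 145],
    "primeval": [1, 2, 13, 37, 107, 113, 137, 1013],
    "weird": [70, 836, 4030, 5830, 7192],
}

# The numbers obtained from the clues, in the order given above.
GROUPS = {
    "catalan": [2, 1, 132, 42, 429, 14],
    "pentagonal": [92, 117, 70, 145, 35, 1, 22, 12, 5],
    "primeval": [137, 1, 37, 13, 107, 1013, 113],
    "weird": [70, 4030, 836, 7192],
}


def extract_letter(name, terms, group):
    """Return (missing number, letter of the name at the missing number's position)."""
    missing = [t for t in terms if t not in group]
    # Exactly one term is missing, and the full list is as long as the name.
    assert len(missing) == 1
    assert len(terms) == len(name)
    position = terms.index(missing[0])  # 0-based position in the sequence
    return missing[0], name[position]


letters = {
    name: extract_letter(name, SEQUENCES[name], GROUPS[name])
    for name in SEQUENCES
}

for name, (missing, letter) in letters.items():
    print(f"{name}: missing {missing}, letter {letter.upper()}")
```

The two assertions inside `extract_letter` encode the first two “aha moments”: exactly one number is missing, and the completed list is as long as the name.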

Let’s go back and try to identify the remaining sequences. We already know the number of letters in the name of each sequence, as well as the range within the alphabet. The third sequence might present a challenge, as its numbers are small and there might be many sequences that fit the pattern, but let’s try. The results are below, with the capitalized letter being the one that is needed for the answer.

- abundAnt

- caTalan

- dEficient or iMperfect

- pentaGonal

- pRimeval

- proNic or proMic

- weiRd

What is going on? There are two sequences that fit the pattern of the third group and the sequence for the sixth group has many names, two of which fit the profile but produce different letters. Now we get to your third “aha moment”: you have already seen some of the sequence names before, because they are in the puzzle. This will allow you to disambiguate the names.

Now that we have all the letters, we need the order. Sequences are mentioned inside the puzzle. You were forced to notice that because you needed the names for disambiguation. Maybe there is something else there. On closer examination, all but one of the sequence names are mentioned. Moreover, with one exception, the clues for each sequence mention exactly one other sequence. Once you connect the dots, you’ll have your last “aha moment”: the way the sequences are mentioned can provide the order. The first letter G will be from the pentagonal sequence, which was not mentioned. The clues for the pentagonal sequence mention the primeval sequence, which will give the second letter R, and so on.

The answer is GRANTER.
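The ordering step amounts to following a chain of mentions. Here is a minimal Python sketch of it; the names `LETTER`, `MENTIONS`, and `chain_order` are invented for illustration, the letters are the disambiguated ones from the list above, and the mentions are read off the clue groups in the puzzle text below.

```python
# Letter contributed by each sequence (after disambiguation).
LETTER = {
    "abundant": "A",
    "catalan": "T",
    "deficient": "E",
    "pentagonal": "G",
    "primeval": "R",
    "pronic": "N",
    "weird": "R",
}

# Which other sequence the clues of each group mention.
# The weird group mentions none, so it has no entry.
MENTIONS = {
    "pentagonal": "primeval",
    "primeval": "abundant",
    "abundant": "pronic",
    "pronic": "catalan",
    "catalan": "deficient",
    "deficient": "weird",
}


def chain_order(mentions, names):
    """Start at the one name nobody mentions and follow the mentions."""
    mentioned = set(mentions.values())
    starts = [n for n in names if n not in mentioned]
    assert len(starts) == 1  # exactly one sequence is never mentioned
    order = [starts[0]]
    while order[-1] in mentions:
        order.append(mentions[order[-1]])
    return order


order = chain_order(MENTIONS, LETTER)
answer = "".join(LETTER[name] for name in order)
print(answer)
```

The chain starts at the only unmentioned sequence and ends at the only group that mentions no other sequence, which is why both exceptions are needed for the order to be well defined.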

Many old-timers criticized the 2013 MIT Mystery Hunt. They are convinced that a puzzle shouldn’t have more than one “aha moment.” I like my “aha moments.”

*****

- (the largest integer n such that there exists a Platonic solid with n vertices, a Platonic solid with n edges, and a Platonic solid with n faces)

- (the largest two-digit tetrahedral number)/(the smallest value the second smallest angle of a convex hexagon with all integer degrees can have)

- (the number of positive integers less than 2013 that are divisible by 100, but not divisible by 70)

- (the number of two-digit numbers that produce a square when summed up with their reverse) ⋅ (the smallest number of weighings on a balance scale that guarantees to find the only fake coin out of 100 identical coins, where the fake coin is lighter than other coins)

- (the only two-digit number n such that $2^n$ ends with n) − (the second smallest, and conjectured to be the largest, triangular number such that its square is also triangular)

- (the smallest non-trivial compositorial number that is also a factorial)

- (the sum of the smallest three positive pronic numbers)

*****

- (the digit you get when you sum up the digits of $2013^{2013}$ repeatedly until you get a single digit) − (the greatest common factor of the indices of the Fibonacci numbers divisible by 13)

- (the largest common divisor of numbers of the form $p^2 − 1$ for primes p greater than three) − (the largest sum of digits that can appear on a 12-hour digital clock starting from 1:00 up to 12:59)

- (the largest Fibonacci number, such that it and all positive Fibonacci numbers less than it are deficient) + (the difference between the sum of all even numbers up to 100 and the sum of all odd numbers up to 100) − (the first digit of a four-digit square that has the first two digits the same and the last two digits the same)

- (the smallest composite Jacobsthal number) ⋅ (the only digit needed to express the number of diagonals of a convex hendecagon)/(the smallest prime divisor of $13^{2013} + 1$)

- (the smallest integer the fate of whose aliquot sequence is unknown) + (the largest amount of money in cents you can have in American coins without having change for 2 dollars) − (the repeated number in the aliquot cycle of 95) ⋅ (the second-smallest integer n such that the Russian word for n has n letters)

- (the smallest positive even integer that’s not a totient)

*****

- (the number of letters in the last name of a famous Russian writer whose year of birth many Russians use to help them memorize the digits of e)

- (the number of pluses you need to insert in a row of 20 fives so that the sum is 1000)

- (the number of positive integers less than 2013 such that not all their digits are distinct) − (the number of four-digit numbers with only odd digits) − (the largest Fibonacci square)

- (the number of positive integers n for which the sum of the n smallest positive integers evenly divides 18n)

- (the number of trailing zeroes of 2013!) − (the number of sets in the game of Set such that every feature is different on all three cards) − (an average speed in miles per hour of a person who drives somewhere with a speed of 420 miles per hour, then drives back using the same route with a speed of 210 miles per hour)

- (the smallest fortunate triangular number)

- (the smallest weird number)/(the only prime one less than a cube)

- (the third most probable product of the numbers showing when two standard six-sided dice are rolled)

*****

- (the largest integer number of dollars you can’t pay if you have an unlimited supply of 9-dollar bills and 13-dollar bills) − (the positive difference between the two prime numbers that do not share a unit digit with any other prime number)

- (the largest three-digit primeval number) − (the largest number of distinct SET cards without a set)

- (the number conjectured to be the second-largest number such that two to its power has no zeroes) − (the largest number whose cube has at most two distinct digits and no zeroes)

- (the number of 5-digit palindromic integers in base 5) + (the only positive integer that is five times the sum of its digits)

- (the only Fibonacci number that is a double of a prime) + (the only prime p such that p! has p digits) − (the only fixed point of look-and-say operation)

- (the only number whose concatenation with itself is prime)

- (the only positive integer that differs by 1 from a square and a nonsquare cube) − (the largest number such that its divisors are each 1 less than a prime)

- (the smallest admirable number)

- (the smallest evil untouchable number)

*****

- (the alphanumeric value of MANIC SAGES) + (the sum of all three-digit numbers you can get by permuting digits 1, 2, and 3) + (the number of two-digit integers divisible by 9) − (the number of rectangles whose sides are composed of edges of squares of a chess board)

- (the integer whose standard Roman numeral representation is alphabetically later than all others) − (the number you get if you divide a three-digit number with identical digits by the sum of the digits)

- (the largest even integer that is not a sum of two abundant numbers) − (the digit in the first position where e and π have the same digit)

- (the number formed by the last two digits of the sum: 1! + 2! + 3! + 4! + . . . + 2013!)

- (the only positive integer such that if you sum the digits and the squares of the digits, you get the original number back) + (the largest prime factor of the smallest Carmichael number)

- (the smallest multi-digit hyperperfect number such that more than half of its digits are the same) − (the sum of digits that cannot be the last digits of squares) ⋅ (the largest base n in which 8n is not written like 80) ⋅ (the smallest positive integer that leaves a remainder of 2 when divided by 3, 4, and 5)

- (the smallest three-digit brilliant number) − (the first decimal digit of the number that in hexadecimal gives the house number of Sherlock Holmes)

*****

- (the number of evil minutes in an hour)

- (the number of fingers on ten hands) − (the smallest number such that its square has a digit repeated three times)

- (the number of ways you can rearrange letters of MANIC)/(the number of ways you can rearrange letters of SAGES)

- (the only multi-digit Catalan number with digits in strictly decreasing order)

- (the smallest perfect number)

*****

- (the largest product of positive integers that sum up to 10) + (the smallest perimeter of a rectangle with integral sides of area 120) − (the day of the month of the second Thursday in a January that has exactly 4 Mondays and 4 Fridays)

- (the second-largest number with all distinct digits, such that all the words in its American English representation start with the same letter) + (the largest square-free composite number that contains each of the digits 1, 2, 3, 4 exactly once in its prime factorization) + (the number of ways you can flip a coin 10 times so that the number of heads is the same as the number of tails) + (the smallest positive integer such that 2 to its power contains 2013 as a substring) + (the sum of five prime numbers formed from the digits 2, 3, 5, 7, 8, 9 where each digit is used exactly once) + (the number of days in a year where the day of the month is odious) + (the sum of the digits each of which spelled out has an alphanumeric value equal to the meaning of life, the universe, and everything) ⋅ (the sum of all prime numbers p such that p + 20 and p + 40 are also prime) + (the first digit of the total number of legal moves of the Black king in chess)

- (the second-largest three-letter palindrome in Roman numerals)/((the smallest composite number not divisible by any of its digits)/(the last digit of $2013^{2013}$) − (the digit in position 2013 of the string formed by concatenation of all integers into one stream: 123456789101112…)) − (the number of days in a year such that the month and the day of the month are simultaneously composite)

- (the second-smallest cube with only prime digits) ⋅ (the smallest perimeter of a Pythagorean triangle)/(the last digit to appear in the units place of a Fibonacci number) + (the greatest common divisor of the sums in degrees of the interior angles of convex polygons with an even number of sides) + (the number of subsets that you can form from the set {1,2,3,4,5,6,7,8,9} that do not contain two consecutive numbers) − (the only common digit of 2013 base 8 and base 9)