Sparsity and Computation

Once again I am one of the organizers of the Women and Math Program at the Institute for Advanced Study in Princeton, May 16-27, 2011. It will be devoted to an exciting modern subject: Sparsity and Computation.

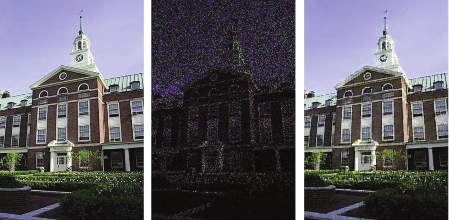

In case you are wondering about the meaning of the picture on the program’s poster (which I reproduce below), let us explain.

The left image is the original picture of Fuld Hall, the main building on the IAS campus. The middle image is a corrupted version, in which you barely see anything. The right image is a striking example of how much of the image can be reconstructed from the corrupted image using clever algorithms.

Female undergraduates, graduates and postdocs are welcome to apply to the program. You will learn exactly how the corrupted image was recovered and much more. The application deadline is February 20, 2011.

Eugene Brevdo generated the pictures for our poster and agreed to write a piece for my blog explaining how it works. I am glad that he draws parallels to food, as the IAS cafeteria is one of the best around.

by Eugene Brevdo

The three images you are looking at are composed of pixels. Each pixel is represented by three integers corresponding to red, green, and blue. The values of each integer range between 0 and 255.

The image of Fuld hall has been corrupted: some pixels have been replaced with all 0s, and are therefore black; this means the pixel was not “observed”. In this corrupted version, 85% of the pixel values were not observed. Other pixels have been modified to various degrees by stationary Gaussian noise (i.e. independent random noise). For the 15% observed pixel values, the PSNR is 6.5 db. As you can see, this is a badly corrupted image!

The really interesting image is the one on the right. It is a “denoised” and “inpainted” version of the center image. That means the pixels that were missing were filled in and the observed pixel integer values were re-estimated. The algorithm that performed this task, with the longwinded name “Nonparametric Bayesian Dictionary Learning,” had no prior knowledge about what “images should look like”. In that sense, it’s similar to popular wavelet-based denoising techniques: it does not need a prior database of images to correct a new one. It “learns” what parts of the image should look like from the original image, and fills them in.

Here’s a rough sketch of how it works. The idea is to use a new technique in probability theory — the idea that a a patch, e.g. a contiguous subset of pixels, of an image is composed of a sparse set of basic texture atoms (from the “Dictionary”). Unfortunately for us, the number of atoms and the atoms themselves are unknowns and need to be estimated (the “Nonparametric Learning” part). In a way, the main idea here is very similar to Wavelet-based estimation, because while Wavelets form a fixed dictionary, a patch from most natural images is composed of only a few Wavelet atoms; and Wavelet denoising is based on this idea.

We make two assumptions that allow us to simplify and solve this problem, which is unwieldy-sounding and vague when the texture atoms have to be estimated. First, there may be many atoms, but a single patch is a combination of only a sparse subset of them. Second, because each atom appears in part in many patches, even if we observe some noisily, once we know which atoms appear in which patches, we can invert and average together all of the patches associated with an atom to estimate it.

To explain and programmatically implement the full algorithm that solves this problem, probability theorists like to explain things in terms of going to a buffet. Here’s a very rough idea. There’s a buffet with a (possibly infinite) number of dishes. Each dish represents a texture atom. An image patch will come up to the buffet and, starting from the first dish, begins to flip a biased coin. If the coin lands on heads, the patch takes a random amount of food from the dish in front of it (the atom-patch weight), and then walks to the next dish. If the coin lands on tails, the patch skips that dish and instead just walks to the next. There it flips its coin with a different bias and repeats the process. The coins are biased so the patch only eats a few dishes (there are so many!). When all is said and done, however, the patch has eaten a random amount from a few dishes. Rephrased: the image patch is made from a weighted linear combination of basic atoms.

At the end of the day, all the patches eat their own home-cooked dessert that didn’t come from the buffet (noise), and some pass out from eating too much (missing pixels).

If we know how much of each dish (texture atom) each of the patches ate and the biases of the coins, we can estimate the dishes themselves — because we can see the noisy patches. Vice versa, if we know what the dishes (textures) are, and what the patches look like, we can estimate the biases of the coins and how much of a dish each patch ate.

At first we take completely random guesses about what the dishes look like and what the coins are, as well as how much each patch ate. But soon we start alternating guesses between what the dishes are, the coin biases, and the amounts that each patch ate. And each time we only update our estimate of one of these unknowns, on the assumption that our previous estimates for the others is the truth. This is called Gibbs sampling. By iterating our estimates, we can build up a pretty good estimate of all of the unknowns: the texture atoms, coin biases, and the atom-patch weights.

The image on the right is our best final guess, after iterating this game, as to what the patches look like after eating their dishes, but before eating dessert and/or passing out.

Share:

Leave a comment